LangChain Changelog

LangSmith Agent Builder has been renamed to LangSmith Fleet. The new name is reflected across the platform.

You can now pin any experiment as your baseline, so every subsequent run is automatically measured against it. Instead of manually selecting experiments for comparison, your chosen baseline persists across all future runs.

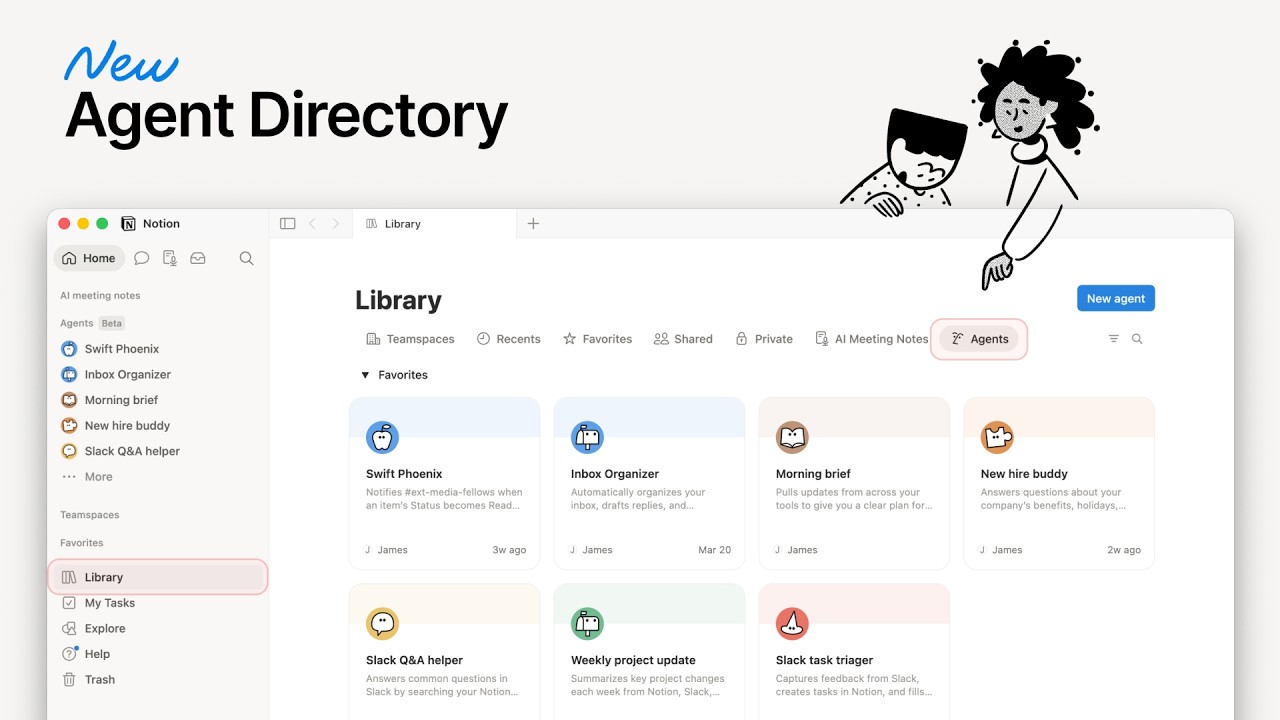

Agent Builder has new enhancements centered on collaboration. There's now a central Chat agent with access to file uploads and a tool registry, so working with an agent feels more like working with a teammate.

The LangSmith Insights Agent now runs on your schedule, no manual triggers required. Set a recurring cadence (daily, weekly, or a custom cron expression) and the agent generates reports automatically.
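For readers unfamiliar with cron syntax, here is a minimal sketch of what daily, weekly, and custom cadences look like as standard five-field cron expressions. It assumes the conventional `minute hour day-of-month month day-of-week` layout. Whether LangSmith accepts exactly this format, and which time zone it applies, is an assumption to check against the product docs. The schedule names and the `split_cron` helper are hypothetical and not part of any LangSmith API.

```python
# Illustrative only: standard five-field cron expressions.
# Field order: minute, hour, day of month, month, day of week.
CRON_FIELDS = ("minute", "hour", "day_of_month", "month", "day_of_week")

# Hypothetical example cadences (names are not LangSmith identifiers).
EXAMPLE_SCHEDULES = {
    "daily": "0 9 * * *",      # every day at 09:00
    "weekly": "0 9 * * 1",     # every Monday at 09:00
    "custom": "30 6 * * 1-5",  # Monday through Friday at 06:30
}


def split_cron(expression: str) -> dict:
    """Split a five-field cron expression into named fields.

    This checks only the field count; it does not validate value ranges.
    """
    parts = expression.split()
    if len(parts) != len(CRON_FIELDS):
        raise ValueError(
            f"expected {len(CRON_FIELDS)} fields, got {len(parts)}: {expression!r}"
        )
    return dict(zip(CRON_FIELDS, parts))


if __name__ == "__main__":
    for name, expression in EXAMPLE_SCHEDULES.items():
        print(name, split_cron(expression))
```

Splitting the expression into named fields makes it easier to sanity-check a custom cadence before saving it, for example confirming that the day-of-week field is what restricts a report to weekdays.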

deepagents v0.4 ships pluggable sandbox support, smarter conversation history summarization, and the Responses API as the default for OpenAI models. These changes are intended to help agents handle complex tasks.

You can now configure which parts of a trace's inputs and outputs appear directly in the tracing table. This is especially useful for projects with custom data structures.